Jason Rogers, PhD and Geertje van Bergen, PhD take us on a sensory deep dive into flavor perception, facial expression analysis, and food choice and consumption behavior.

Highlights

- Overview of facial expression analysis as a tool for evaluating consumer behavior

- Introduction to FaceReader software

- Understanding food choice and perception, and how these can help predict commercial success of food products

Webinar Summary

Dr. Rogers begins the webinar with an overview of automated facial expression analysis: the approach is impartial and reproducible, and it builds on decades of academic research. He clarifies that it is not as robust as a human observer and that it is not facial recognition or biometrics. He then introduces FaceReader, software from Noldus that automatically detects facial expressions and classifies them.

“In addition to the expressions, we are also classifying the individual muscles in the face that make up those expressions.”

FaceReader works via three steps: it uses an algorithm to automatically find a face, creates a face model (active appearance model/deep face model), and lastly classifies the expression. The deep face model works by breaking up the face, creating low-, mid-, and high-level concepts to recreate individual facial parts, and using neural networks to put it all back together.
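The three steps described above can be pictured as a simple pipeline. The sketch below is only an illustration of that structure and is not FaceReader's API: the function names, the toy grayscale frame, the brightness feature, and the placeholder labels are all assumptions made for this example.

```python
from typing import Callable, Optional, Sequence, Tuple

Frame = Sequence[Sequence[int]]      # toy grayscale frame: rows of pixel values
Box = Tuple[int, int, int, int]      # top, left, bottom, right (inclusive)
Features = Sequence[float]


def analyze_frame(
    frame: Frame,
    find_face: Callable[[Frame], Optional[Box]],
    build_model: Callable[[Frame, Box], Features],
    classify: Callable[[Features], str],
) -> Optional[str]:
    """Chain the three stages; return None when no face is found."""
    box = find_face(frame)              # step 1: find a face
    if box is None:
        return None
    features = build_model(frame, box)  # step 2: model the face
    return classify(features)           # step 3: classify the expression


# Hypothetical stand-in stages, far simpler than any real face model.
def toy_find_face(frame: Frame) -> Optional[Box]:
    """Bounding box of all non-zero pixels, or None if the frame is empty."""
    coords = [(r, c) for r, row in enumerate(frame)
              for c, value in enumerate(row) if value > 0]
    if not coords:
        return None
    rows = [r for r, _ in coords]
    cols = [c for _, c in coords]
    return (min(rows), min(cols), max(rows), max(cols))


def toy_build_model(frame: Frame, box: Box) -> Features:
    """Reduce the boxed region to one feature: its mean brightness."""
    top, left, bottom, right = box
    pixels = [frame[r][c] for r in range(top, bottom + 1)
              for c in range(left, right + 1)]
    return [sum(pixels) / len(pixels)]


def toy_classify(features: Features) -> str:
    """Placeholder rule; a real classifier would be a trained model."""
    return "label-A" if features[0] >= 128 else "label-B"


result = analyze_frame([[0, 200], [0, 200]],
                       toy_find_face, toy_build_model, toy_classify)
```

Passing the stages in as functions keeps the ordering explicit (detect, then model, then classify) and shows why a frame without a detectable face produces no classification at all.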

Dr. Rogers moves on to discuss the “Green Banana”, a sensory discrimination test similar to the Stroop test. The researchers ran FaceReader while sensory experts tasted a drink whose flavor and color were mismatched. Participants who answered correctly showed more confused expressions, while those who answered incorrectly raised their eyebrows. A regression analysis showed that facial muscle movements, called Action Units, significantly predicted the self-report outcome. This concludes Dr. Rogers’ portion of the webinar.
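To make the regression idea concrete, here is a minimal sketch of predicting a binary outcome from Action Unit intensities. The summary does not say which regression model or which Action Units were used, so the logistic model, the two feature names, and every number below are assumptions; the data are synthetic and are not results from the study. The sketch also does not test statistical significance.

```python
import math

# Synthetic, made-up Action Unit intensities (0 to 1); NOT data from the study.
# Hypothetical columns: (brow_raise, brow_lower).
X = [
    (0.1, 0.8), (0.2, 0.7), (0.1, 0.6), (0.3, 0.9),  # answered correctly
    (0.8, 0.1), (0.7, 0.2), (0.9, 0.3), (0.6, 0.1),  # answered incorrectly
]
y = [1, 1, 1, 1, 0, 0, 0, 0]  # 1 = correct, 0 = incorrect (synthetic labels)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(X, y, lr=0.5, epochs=2000):
    """Fit logistic regression with plain batch gradient descent."""
    n_features = len(X[0])
    weights = [0.0] * n_features
    bias = 0.0
    n = len(X)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for row, label in zip(X, y):
            p = sigmoid(sum(w * x for w, x in zip(weights, row)) + bias)
            err = p - label  # gradient of the log loss w.r.t. the score
            for j, x in enumerate(row):
                grad_w[j] += err * x
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def predict(row, weights, bias):
    """Return 1 if the predicted probability of a correct answer is >= 0.5."""
    score = sum(w * x for w, x in zip(weights, row)) + bias
    return 1 if sigmoid(score) >= 0.5 else 0


weights, bias = fit_logistic(X, y)
predictions = [predict(row, weights, bias) for row in X]
```

On this toy data the fitted weight for the hypothetical brow-raise feature comes out negative and the brow-lower weight positive, which only reflects how the synthetic rows were constructed. A real analysis would use measured intensities, a held-out sample, and a statistics package that reports significance.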

“I would never say FaceReader should replace anything, but FaceReader is a nice supplement to . . . your respondents writing things down . . . now you have the ability to classify their expressions while they are writing it down and you can look at those matches or mismatches, and it might help uncover some hidden things you might have otherwise missed.”

Dr. van Bergen starts the second part of the webinar by highlighting the complexity of food choice: commercial success does not rely on taste alone. Food choice is shaped by product-related, person-related, and environment-related factors.

“If you’re eating with your family and your family members are vegans, you may not be eating meat products that evening even if you would have if you weren’t surrounded by the same people.”

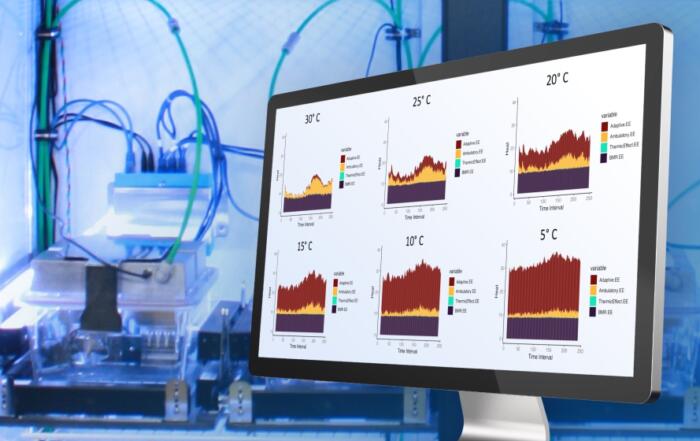

Dr. van Bergen’s group investigates the underlying process of food evaluations by assessing consumer expectations and using real-time measurement techniques. These can be physiological, behavioral, or expressive measures that stem from unconscious associations, such as facial expressions or bite/sip size. She explains that sensory perception is proactive: our brains generate predictions about what we see, hear, feel, smell, and taste based on memory. This predictive processing saves energy as long as the predictions match what is actually perceived.

Next, Dr. van Bergen discusses food perception in context. Food-context congruity had the strongest effect on food evaluations made before tasting, while implicit measures (such as the amount of food eaten) were also sensitive to general context effects (such as the consumption environment).

“. . . food experiences are contextualized. The food memories we have include information about the situation that these foods were eaten in.”

Furthermore, studying novel food combinations revealed that providing additional information strengthens hedonic expectations. Telling participants what they were about to eat before tasting changed their expectations significantly.

“. . . by having additional information available other than just sensory or liking evaluations, we hope to increase the predictive validity of these tests for product success in the market.”

Dr. van Bergen summarizes that her group is trying to observe the whole food experience to better predict which products may or may not become a commercial success.

Resources

Q&A

- Why do you think the label and premixed scores in the second experiment were so different?

- Does the FaceReader software allow for one calibration per participant?

- Can something similar to FaceReader be used to measure focus or a sense of effort? For example, using facial expression or muscle activation analysis for patient engagement in rehabilitation exercises.

- How can FaceReader be helpful in assessing a learning response while taking a course or learning something new online?

- How do you see this tool being used for future research studies?

- Does the software have the capability to integrate eye tracking?


Presenters

Jason Rogers, PhD

Senior Research Scientist

Noldus Information Technology

Geertje van Bergen, PhD

Consumer Scientist

Food, Health and Consumer Behaviour

Wageningen University and Research